AprilTags hard at work as part of scanning automation. Custom stands and holders allow for more robust registration as well as automated photometric measurements related to scale and exposure.

These are particularly useful with photogrammetry-based pipelines. Our scanning toolkit includes a whole library of these. They not only help automate tie point cleanup, but we now also use them to automatically estimate proper exposure compensation.
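As a rough illustration of how a detected tag could feed scale and exposure estimates, here is a minimal Python sketch. It assumes the four tag corners are already detected in pixel coordinates, the printed tag size is known, and the mean linear value of a reference area on the tag can be measured. The function names and sample numbers are hypothetical and are not taken from the actual toolkit.

```python
import math

def tag_scale_mm_per_px(corners_px, tag_size_mm):
    """Estimate image scale from one square tag (hypothetical helper).

    corners_px: four (x, y) corner positions in pixels, in order around the tag.
    tag_size_mm: physical edge length of the printed tag.
    """
    edges = [math.dist(corners_px[i], corners_px[(i + 1) % 4]) for i in range(4)]
    mean_edge_px = sum(edges) / 4.0
    return tag_size_mm / mean_edge_px

def exposure_compensation_ev(measured_mean, target_mean):
    """Stops of compensation that bring a reference patch to its target value.

    Both values are assumed to be linear (not gamma-encoded) intensities.
    """
    if measured_mean <= 0 or target_mean <= 0:
        raise ValueError("intensities must be positive")
    return math.log2(target_mean / measured_mean)

# Example with made-up numbers: a 50 mm tag seen as a 100 px square,
# and a reference patch that reads half as bright as it should.
scale = tag_scale_mm_per_px([(0, 0), (100, 0), (100, 100), (0, 100)], 50.0)
ev = exposure_compensation_ev(0.09, 0.18)
```

Averaging the four edge lengths is only reasonable when the tag is roughly facing the camera. A tilted tag would need a proper pose estimate instead.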

Doing some more ESD tests to destruction. The piezoelectric discharge doesn't quite match the HBM (Human Body Model); it's something in between MM (Machine Model) and CDM (Charged-Device Model). The video doesn't quite capture the spark but it can easily reach 20-30 kV.

After a lot more testing and abuse the main MCU chip has died, though all other parts stayed intact. In some earlier versions, before putting ESD protection in place, I had many parts failing here and there. It is quite easy to get something working but having it work reliably is hard.

2023 has been quite an amazing and productive year. While focused on lower-volume production, we are grateful to all our early customers and clients. There were delays and unexpected shortages but we are well set for much larger deliveries in 2024.

Happy New Year Everybody !!!

It always feels great to get good looking Quality Control pictures from a factory right on NYE!

Finally got a chunk of time to redesign the aluminum extrusion enclosure for easier assembly. It is now split into two symmetrical parts. Managing thermal interfaces and mounts in the single-piece version was quite painful during assembly.

Packing up the last hardware batch for this year... just before X-mas. A set of high speed FPMs with adapter rings.

Ready to pack 12-light rig parts including the structural grid, custom 100W LEDs with filters, electronic Fast Polarization Modulator and integrated syncBoxes/Drivers.

PBR Processing example alongside Metashape photogrammetry

An example of using our photometric PBR solver within the Metashape photogrammetry pipeline. For the purpose of this demo the source footage was downsampled and we specifically didn't rely on Pro features of Metashape. Same for Reality Capture one.

Using original resolution footage takes a bit longer than that and building a typical 30-40 GB dataset would take around 60-90 minutes on a relatively beefy PC with 16+ CPU cores, NVMe SSD, 128 GB RAM and RTX30XX+ when building 4-8k PBR texture stacks.

The final 3D model is available on Sketchfab https://skfb.ly/oFRxT

Behind the Scanning Scenes

Behind the scanning scenes. Running basic photometric sequence triggered by Genie Mini 2. This mode is compatible with any 3rd party turntable. More efficient modes available via direct control and usually used with electronic shutter for high fps.

The whole reason to use LEDs as opposed to Speed Lights is to be able to work with a wide range of rolling-shutter sensors that don't work well with non-constant light sources. The typical shutter life of a DSLR/mirrorless camera is slightly over 100,000 shots.

Sometimes 200k, 300k. That makes mechanical shutters impractical, while electronic ones allow for higher frame rates as well. In this video the mechanical shutter is mostly used for the sound. In practice we use the electronic one with a black backdrop.

Scanned 3D Model of this awesome Thursday Rebel Boot is available on Sketchfab https://skfb.ly/os8ON

Rig Close-ups

High-res close-ups of a 12-light rig. Mounted on a GODOX 240FS Light Stand and driven by Syrp Genie Mini 2 turntable. One of the most basic configurations that's easy to move around and actuate. It is important to have as little turntable footprint as possible to minimize light occlusion.

Can drive a number of off the shelf HW for a more time efficient sequencing. In the most universal configuration like what's shown here, it can be driven by any turntable or other triggering equipment in a "slave" mode wirelessly or via standard trigger cable.

Cross-platform pbrScan App

Making sure it all works across a wide range of devices with consistent UI/UX across multiple platforms. That's hardware/rig configuration and capture software.

12-light setup

12-light setup targeted to augment existing photogrammetry rigs with addition of advanced Disney PBR material reconstruction. This particular setup is great for prop and flat 2D material scanning. Compact, portable and could be powered by off the shelf 12V Power banks.

User Manual Work-In-Progress

Working on some of the "User Manual" graphics. Surprisingly, I am quite happy with #blender Freestyle rendering features.

12 Light Photometric Kit

Our standard 12-light photometric setup with 2 daisy-chained syncBoxes. 1 input and 2 output stereo high speed opto-isolated IOs, 2 channels of independent FPM control or a single 2-phase 12V stepper and 8 output 100W (30V 3.3A) LED channels with up to 240 fps capture rate.

The video demonstrates "single view" manual trigger along with generic support for pretty much any off the shelf turntable. Genie Mini 2 in this case.

Motorized Tilta CPL support

Electronic FPM vs Mechanical Tilta CPL - calibration and wireless control. While Fast Polarization Modulators (FPM) can provide superb speeds and image stability, the price and manufacturing of anything larger than 5x5 cm becomes an issue. In those cases, our scanning system does support motorized circular polarizers from Tilta at the cost of slower switching speeds.

FPMs, lots of FPMs

Another production batch has arrived. Optical quality glass with AR coating for fast switching polarization applications. These 5x5 cm modules are suitable for lens apertures of up to 58 mm in diameter.

Sketchfab Staff Pick

Scanning anything wobbly and non-rigid is quite tricky on many levels. Handling (the process), registration, alignment. This scan is done with 30 MP sensors. Switching to 60 MP in progress.

Destructive Scanning

In some cases it's a combination of multiple passes along with some modeled on top of the scan elements that's required to make high quality photo-realistic 3D assets.

Once in a while things have to be broken apart. Destructive PBR #scanning and real-time #Blender / #EEvee real-time #rendering.

Sketchfab Staff Pick

Steampunk decorative goodness :) Scanned using our older 8MP setup with full photometrics. This prop has a nice mix of dielectrics and a variety of metallic paints.

Skydance / Marvel Game

Some of the recent stuff we've been involved with. Scanning photo-realistic props and PBR materials using advanced #inciprocal photometry tech. Real-time rendered in #UnrealEngine5

Four Heroes. Two Worlds. One War. @MarvelGames and @Skydance New Media are proud to share the first look at our new AAA game with an ensemble cast and original story set in a unique Marvel Universe.

Acquiring full Disney PBR materials and complex shiny geometries without spraying. Creating digital product and prop doubles that enable photo-realistic real-time rendering in Unreal Engine 5.

PBR Materials for Realistic Characters

We are finally getting closer to bringing our PBR material scanning tech to a larger group of studios, supporting a variety of commercially available and custom made light rigs, performing all the heavy processing on local GPU powered servers.

This particular scan has been captured at Ecco Studios LA, utilizing Esper light cage rig and IO Industries Inc. machine vision cameras with our custom #inciprocal optimized 32 light scene sequence and advanced photometric processing.

The same production-proven pipeline for realistic prop scanning but optimized for multi-cam / multi-light conditions. No need to "monkey" with the data and lookdev. Just plug-and-play standard PBR materials.

3D Model is also available @Sketchfab for inspection https://skfb.ly/ovSwp However it's recommended to render with pre-integrated sub-surface-scattering techniques. The ones that don't "blur" the albedo texture in any way.

Scanning the Enemies

Working on the latest @Unity's Demo team Enemies demo was a blast. On our end it is the result of many years of advancing photometric processing to enable photo-realistic rendering in real-time. On the left is Unity HDRP vs one of the real photos under the same lighting condition.

In collaboration with @Infinite-Realities that involves thousands of shots of an actor under various lighting conditions at high frame rate using carefully crafted spatio-temporal sequencing ...

A full Disney PBR texture stack is reconstructed, which usually takes around 40-50 minutes on a single @NVidia RTX 3080. Here is an example of raw as-is output from our photometric processor. The data is plug and play. No extra tuning needed.

The specular component is perhaps the most characteristic one for the face that requires at least two GGX lobes to render realistically. Here is an example of some of the RAW render vs real footage. The second image is a rendered spec of the raw processed material data.
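To make the "two GGX lobes" idea concrete, here is a minimal Python sketch of a two-lobe GGX normal distribution term. It uses the standard GGX (Trowbridge-Reitz) distribution. The blend weight and the two roughness values are hypothetical parameters picked for illustration, and this is only the distribution term, not a full BRDF and not necessarily how our processor or the Unity shader implements it.

```python
import math

def ggx_ndf(n_dot_h, alpha):
    """Standard GGX / Trowbridge-Reitz normal distribution function.

    n_dot_h: cosine between the surface normal and the half vector.
    alpha:   GGX width parameter (often linear roughness squared).
    """
    a2 = alpha * alpha
    d = n_dot_h * n_dot_h * (a2 - 1.0) + 1.0
    return a2 / (math.pi * d * d)

def two_lobe_ggx_ndf(n_dot_h, alpha_a, alpha_b, mix):
    """Linear blend of two GGX lobes; mix=0 gives lobe A only, mix=1 lobe B only."""
    return (1.0 - mix) * ggx_ndf(n_dot_h, alpha_a) + mix * ggx_ndf(n_dot_h, alpha_b)

# Hypothetical skin-like setup: a broad base lobe plus a tighter secondary lobe.
value = two_lobe_ggx_ndf(n_dot_h=0.98, alpha_a=0.35, alpha_b=0.15, mix=0.3)
```

Blending the lobes linearly keeps the combined distribution normalized as long as each lobe is normalized and the weights sum to one.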

And last but not least, on the diffuse shading side of things the Modified Lambertian model is a must ... Something that we discussed a while back. Most real-world surfaces that exhibit a fair amount of sub-surface scattering are non-Lambertian. A simple dot(N, L) doesn't fit. Modified Lambertian Shading takes care of that.

Check out the final demo on Youtube: https://youtu.be/eXYUNrgqWUU

Enemies Unity Cinematic Teaser

We are very glad to be a part of that effort and pleased to see a great degree of flexibility of @Unity High Definition Render Pipeline that allows easy integration of advanced photometric data.

It's Face Day @ Ecco Studios in LA and yes ..., that is a nuclear reactor. The data from our advanced Light Stage processing is coming.

Pro Weightlifting Glove

Got some new gym gloves last Friday and did a quick demo scan after realizing how many different materials and how much detail are in there.

A previous pair lasted a couple of years so I thought it was definitely a good time to scan it while it's still new. The material variety is pretty cool in there: plastics, velcro, Genuine and Suede leather (for the palm), a bunch of synthetics like various variations of Cordura...

Didn't pay too much attention to modelling so just put a bit of paper in there and a piece of wire for mount.... the shape is not that great, so I just released it for free. Will figure out a better way to mount it for a commercial version though.

Also, because of that, some parts moved a tiny bit, which resulted in some texture blurring, though it's hard to see.

Took about 90 minutes to do 90 perspectives (3 full turns 30 steps each) that were used for standard photogrammetry process - feature extraction, matching and view alignment. 8190 images total for custom structured light for high-poly point-cloud and geometry as well as for #inciprocal photometric tech to capture materials and perform BRDF estimations.

The Holiday Season is coming ... 🍗

#savetheturkeys

We wanted to take some time and see how far we can push the realism of capturing the detail of light and matter interaction when it comes to poultry. As much as we all love Turkeys we wanted to save some this year and go with Chicken instead (nah... they are just hard to find).

Anyhow, this scan took about 50 minutes to do the full capture, which included structured light + photogrammetry to get the geometry and some part of the material components along with photometry to extract PBR material parameters.

Photogrammetry was mostly used to find and register features for camera alignment, and structured light to build the point cloud, since photogrammetry output usually requires a lot of cleaning and ZBrushing ... Almost 9000 photos (8736 to be exact) were taken using a single new Raspberry Pi HD camera. They produced about 7 million points, which were then tessellated, decimated and uv-mapped, and further processed with a custom photometric pipeline to get all the material data. That took about an hour on a single nVidia RTX GPU.

The challenging part was getting the bottom of the chicken. It had to be scanned separately and then manually fitted back to the main model. The fact that it always moved a bit ±5 pixels here and there, just settling down that had to be taken into account did not make it any easier.

Even though the number of photos looks a bit crazy, in reality it is much faster to produce a high quality scan due to its automated nature that doesn't require too much cleaning compared to other solutions. And you get all the PBR data right away.

Out of 8736 photos only 80 unique perspectives were used but those 80 ones were synthesised from 2400 input photos using light multiplexing method to allow for robust feature detection and matching. 1280 photos used for Sub-surface scattering estimation. 4000 for structured light reconstruction to save hours and hours of manual cleaning of final points and high-poly meshes in ZBrush - this way we save the need to do a lot of manual work. And finally, around 3000 photos were used for PBR material component reconstruction.

But probably the toughest part about scanning food is you get super-very-really hungry ... learned it the hard way 😁

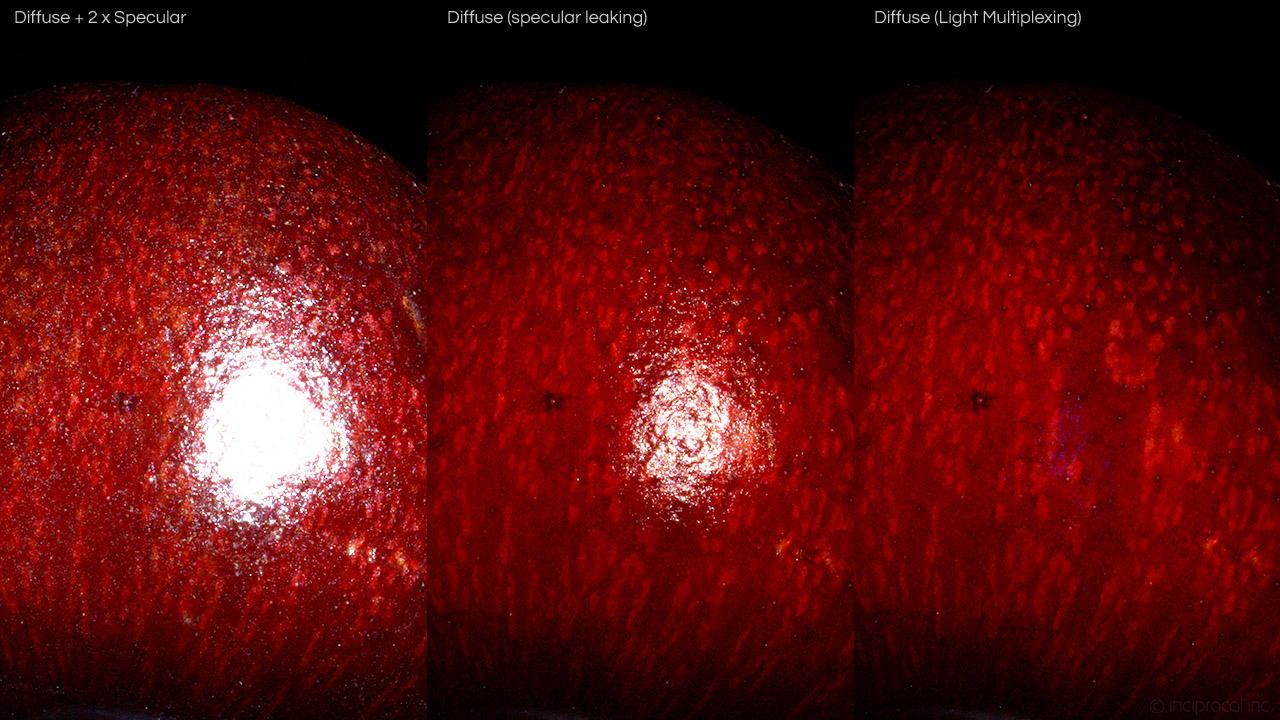

The Cherry Case

Once in a while we run into very degenerate cases that seem super trivial at first but then turn into a big project.

Have been doing some macro photometric scans of various things but Cherries took a long time to figure out. Thinking of scanning, the end goal is to produce the final asset with all sets of data required to render it properly and make it look indistinguishable from the original object when rendered under the same lighting conditions. Typically, geometry is 10, maybe 20% at best of the final asset when it comes to capturing material properties along with light and matter interactions.

The problem with Cherries is that the diffuse albedo is really dark but the surface is very smooth at micro level though has enough roughness detail that is easily visible. That detail is very patchy and sparse that makes those cherries look awesome when lit artistically, but a lot, if not most of structured light techniques fail if you only try to look at specular lighting component. The fact that the microfacets are so smooth is not helping either.

The thing that actually reflects the light is a lot smaller than the footprint of the projected feature.

The diffuse part is tough as well. The skin is so thin that there is no classical diffuse component so to speak. It goes directly into very deep subsurface scattering so structured light techniques fail too in terms of getting any good proxy geometry for further processing.

Projected features get blurred to the useless point right away.

We do use photogrammetry once in a while but this case was quite unusual. The thing is that polarization filters are not 100% efficient and the polarization effect largely depends on surface normal and viewing angles. With Cherries it happens that even if you could achieve ideal polarization across the whole visible light spectrum, the fact that normals of the Cherry skin change so much on a visible micro level makes photogrammetry fail, as most of the features are way too view dependent. Thus you get very few matches and very poor camera alignment as a result.

For photometry and material property estimation, every unaligned camera or view means thousands of images and samples wasted - no good.

Gradient lights and other more exotic things are not helping here either. Also, the surface is RED, really RED. Like rgb(255, 0, 0) red, which makes the color camera resolution drop 4x as a result of the Bayer filter.

Subsurface has a lot of detail for photogrammetry to work well as long as there is no specular of any kind at all. After much trial and error we came up with a light multiplexing solution, where the most basic technique involved taking pictures of three separate offset light sources: A, B, C. Then making the photogrammetry friendly shot as max(min(A, B), min(A, C)). That essentially cancels out all view dependent effects. In many cases we use more than 3 lights but it works with 3 or even two as well.
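The three-light combination above can be sketched in a few lines of Python with NumPy. This is a minimal illustration of the max(min(A, B), min(A, C)) formula only. It assumes the three shots are already aligned, linear, equally exposed images of the same view, and the function name is hypothetical.

```python
import numpy as np

def multiplex_three_lights(a, b, c):
    """Combine three aligned shots, each lit by a different offset light.

    Computes max(min(A, B), min(A, C)) per pixel. A highlight that shows up
    under only one of the lights is rejected by the min() it takes part in.
    """
    a, b, c = (np.asarray(img, dtype=np.float32) for img in (a, b, c))
    return np.maximum(np.minimum(a, b), np.minimum(a, c))

# Tiny made-up example: the second pixel has a specular spike under light A only.
shot_a = np.array([[0.20, 1.00]])
shot_b = np.array([[0.20, 0.30]])
shot_c = np.array([[0.90, 0.25]])
friendly = multiplex_three_lights(shot_a, shot_b, shot_c)
```

In the example, the spike in shot_a is replaced by the brighter of the two non-specular observations, and the spike in shot_c at the first pixel is dropped the same way.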

In the end, the Cherry worked out great. Took 3 hours to take 21,000 images (only 96 were used for photogrammetry processing, and those were constructed from thousands of other images via "light multiplexing") taking 100 GB of disk space. The whole photometry and material estimation part took about 2 hours on nVidia Titan GPU. Mostly automated but probably took about 1 hour of manual work handling the rig, doing minor cleanups, UV tuning and low-poly mesh tuning.

This is definitely not a common case as most of the things are much more simple and take much less time to handle. But it shows that you can push easily available DIY photogrammetry scanning a step further by using more controlled lighting scenarios.

Dmitry Andreev @ inciprocal

Originally posted to (private) 3D Scanning Users Group

https://www.facebook.com/groups/3dsug

June 29, 2020

Photo-realistic graphics and real-time applications have been our passion for many years. The key goal behind inciprocal Inc. was to develop a set of technologies, robust software and hardware, pipelines and procedures to enable highly accurate data acquisition for use in photo-realistic applications such as movies, games and eCommerce along with emerging AR/VR experiences.

In our latest #sketchfab Blog Article we discuss a range of topics related to digital asset production, Movie and Game industries, the power of Digital Doubles along with a sneak peek of how a lot of that photo-realistic awesomeness is achieved.

sketchfab.com/blogs/community/seller-spotlight-inciprocal

Antique Globe on Stand is not just a good looking model but an advanced digital double of a real world product. Real photo on the left vs path traced render of low-poly model and a set of advanced PBR textures that accurately capture complex light and matter interactions.

Available on #sketchfab

https://skfb.ly/6Utsn

Cherries und Berries

Have been pushing our tech to new limits with introduction of Surface Macro mode that allows to capture extra degree of surface and light interaction details.

The essence of 3D scanning, especially for photo-realistic real-time applications, is to boil down complex surface properties to a minimal set of data, and for e-Commerce applications it's important to guarantee the accuracy of digital double replica and how well it handles under novel lighting conditions.

Not only we are able to produce a minimal standard PBR (Physically Based Rendering) data set that accurately replicates an object, but back it up with thousands of real vs rendered shots during verification process.

Demo assets available in our "Surface Macro" collection on #sketchfab https://sketchfab.com/inciprocal.com/collections/surface-macro

No meshes, no particles, no VFX.

Ran into this ... totally unexpected effect while finishing Mushroom scanning mini-project. You can't see those spores under normal lighting conditions but under very bright directional light source those Morel Mushroom spores shine like crazy. This totally reminds me of The Last of Us, which is coming out soon as well 😀

More photo-realistic photometric mushroom scans are on their way to our Sketchfab store collection

https://sketchfab.com/inciprocal.com/collections/mushrooms

Found something frightening in the depths of the refrigerator but could not resist scanning it with our photometric process and creating a themed poster.

Likely this exact same fruit was scanned half a year ago and now it makes a good visualization of how material properties changed over time.

These models are also available on #sketchfab

https://skfb.ly/6RA9G

https://skfb.ly/6SDvt

Going a bit crazy with this stay-at-home thing...

Scanning some overstocked supplies here.

"Bathroom Tissue" or anything super white and soft, for that matter, is super challenging. As shown in the first picture, regular photogrammetry would fail easily since there are very few features available when lit for proper albedo acquisition. On top of that, most of the material look comes from small little fibers that account for proper softness look along with sub-surface scattering components. Regular structured light and even basic photometry tend to fail as well. Had to do a lot of advanced processing and optimization for real-time rendering.

As far as actual material components go, there is a bit of very-short-distance SSS baked into albedo and some other channels have increased contrast just so it's easy to see what is going on there.

Available on #sketchfab, unlimited quantities, FREE!!!

https://skfb.ly/6RzEs

Large TP Roll by inciprocal on Sketchfab

Shelter in Place if you want to live.

(NECA Terminator-2 T800)

Some of the details NECA puts in its action figures are incredible. With real-world materials including worn leather, shiny and soft fabrics, black glossy plastics, metallic paints of various kinds and more.

By scanning several parts separately not only we are able to resolve high resolution geometric detail, getting up to 14k points per square centimeter that require no further cleanup, but also capture accurate material properties using advanced photometry.

Producing a good match between real footage and render using standard Disney PBR shading optimized for real-time applications.

#sketchfab @ https://skfb.ly/6QZPn

(Left is real, right is render)

It's great to see how a number of modern technologies bring new life and allow to experience various art forms in novel ways.

This amazing original Fairy artwork by Amy Brown brought to life by Pacific Trading as real-world figurines. Digitized using #inciprocal scanning process and can be played with in real-time on #sketchfab.

As usual, top is a real photo and the bottom is the same scene rendered using low-poly models with accurate materials that allow photo-realistic rendering in real-time whether it's AR/VR, video games, eCommerce or offline high quality path tracing.

https://amybrownart.com

https://skfb.ly/6QTTP

Discovering the fascinating world of Steampunk Art.

(https://skfb.ly/6Qqxz)

An art style that is quite complex in terms of shapes and geometries, with a good mix of different materials, ranging from simple and colored dielectrics to shiny and rusted metals, metallic paints and coats.

This beautiful 5.75" tall figurine by Pacific Giftware is scanned using our advanced scanning process producing assets that enable photo realistic rendering in real-time, targeting low polygon count and basic Disney PBR shading model that takes diffuse albedo, colored specular F0 term, GGX roughness and normal.

Top is the real footage and path-traced render of that exact low-poly model with 2k textures lit under the same lighting conditions on the bottom. Available on #sketchfab.

Whether creating digital doubles, customizing existing or designing new products we are able to capture a great variety of materials that are compatible with now common physically based rendering and enable very accurate visualization and re-lighting of real world surfaces in real-time.

Some of the material sampler collections (Leather, Fabric, Wood) are now available on #sketchfab:

https://sketchfab.com/inciprocal.com/collections

NYE is almost here. Working hard on bringing some of our latest demo asset packs to #sketchfab and Unreal Marketplace early next year.

High-resolution decorative #pier1 faux fern real-time relighting using captured physically based materials.

Happy Thanksgiving Everyone!

This #pier1 Harvest Wood Galvanized Place Card Holder is quite challenging from a material stand point - rough wood, varnished wood, colored metal parts as well as shiny and rusty. But we got'em all 😀

Top is real footage, bottom is rendered under the same lighting conditions with material component breakdown.

#pier1 decorative Faux Fern in Glass Container.

(real photo vs scanned and rendered) along with high quality materials for physically based rendering.

Scanning these black patent leather #adidas shoes has been a real challenge. Required a number of special setups and combining various methods together.

(real lit footage vs #inciprocal scanned and rendered)

#potterybarn potted pothos houseplant scanning, reconstruction and rendering (20k polygons & 1k textures).

Digitizing thin non-solid assets is always a challenge, but possible with the right tech & process.

#worldmarket decorative cactus is an example of composite scanning where spines are handled separately from the main body due to high degree of translucency.

(Left is a real picture, scanned and rendered is on the right)

#inciprocal scanning process performs multi-view, multi-channel acquisition and analysis in spatial and frequency domains, combining various aspects of structured lighting, photometry, photogrammetry and light fields to convert real-world assets into their digital doubles.

We can target various BSDFs with different degree of complexity. The image shows single view decomposition into various components:

- Specular is view dependent reflection.

- Roughness is GGX linear roughness. Chocolate part is smoother than the "cake".

- Normal map is not really a "normal" since we can only talk about effective "normals/surfaces" from the point of view of light-matter interactions. Thus can have as many normals as we like depending on how we handle them at the shading stage.

- Classical diffuse albedo.

- Per channel RGB diffuse normals. Note how soft the Red is compared to the Blue. That's due to sub-surface scattering.

- SSS Total is really a combination of all scattering modes. Deep SSS, Shallow SSS, Diffuse (very very shallow SSS).

What was unexpected here is how little the chocolate part really scatters. "SSS < 0.1 mm" red channel shows how much was scattered shortly after entering the medium. Sprinkles covered with a thin layer of chocolate scatter very little and thus are bright. Chocolate is a bit darker as it scatters a bit deeper and shows up in another frequency channel. And the donut itself reveals some lumpy structures in the short range while it scatters quite a lot in the longer one, as seen in the "SSS 5 mm" channel.

Moreover, the "Diffuse Exponent" is close to Lambertian (1.0) unlike what we see in some more uniform media: fruits, plastics, ceramics, etc...

Mmmmmonday Donuts!

(in each image top part is real, bottom is rendered)

Material properties change quite a bit when you take them out of the refrigerator, going from matte to glossy and back to matte as they dry out a bit. So it is quite hard to get the exact match.

When doing offline rendering or baking, high poly mesh is needed. The top one needs it for better self shadowing, the front one for better scattering.

But with some advanced compression tricks going from 2K to 1.5M polygon mesh only requires 50Kb of entropy.

Scanning food is hard (too yummy)

By projecting a series of specially crafted patterns with aid of various filters and clever software analysis we are able to reconstruct a lot of internal object structures and light interactions to enable realistic rendering.

In this image you can clearly see how little the chocolate part scatters compared to the "cake", not something that we expected. More info and pics next week after tomorrow's artistic photo reference session 😀

As simple as this #pier1 decorative elephant is, diffuse albedo is nearly black with no features where traditional photogrammetry fails. Yet #inciprocal scan is able to capture accurate geometry along with proper lighting response.

(top is a real photo, the bottom one is scanned and rendered)

It's tea o'clock (coke mixed with water actually). Real photo at the top vs render on the bottom (lowpoly and subdiv).

#pier1 ceramic

While doing extensive photo referencing and validation of captured assets it turns out that standard Lambertian Diffuse reflectance is not a good fit for surfaces with strong sub surface scattering component such as plastics, ceramics, fruits and vegetables, etc...

However a simple Phong-like modification solves a lot of problems, matches reality much closer and has minimal impact on real-time performance.
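As an illustration of what such a Phong-like modification could look like, here is a minimal Python sketch that raises the clamped dot(N, L) term to a "diffuse exponent", with 1.0 reproducing plain Lambertian shading. The exact form used in our pipeline is not spelled out here, so both the power form and the (n + 1) / 2 normalization, which keeps the hemispherical integral equal to the Lambertian one, are assumptions of this sketch.

```python
import math

def modified_lambert(n_dot_l, exponent=1.0):
    """Phong-like diffuse term: normalized pow(max(dot(N, L), 0), exponent).

    exponent == 1.0 gives standard Lambertian dot(N, L).
    The (exponent + 1) / 2 factor is an assumed normalization so that the
    integral over the hemisphere matches the Lambertian case.
    """
    cos_theta = max(n_dot_l, 0.0)
    return 0.5 * (exponent + 1.0) * math.pow(cos_theta, exponent)

# An exponent below 1.0 flattens the falloff toward grazing angles,
# a softer look than plain dot(N, L) gives.
soft = modified_lambert(0.25, exponent=0.5)
plain = modified_lambert(0.25, exponent=1.0)
```

The cost is one extra pow() per light, which is in line with the minimal real-time impact mentioned above.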

Fluttershy by inciprocal on Sketchfab

Have been testing high dynamic range light probe capture for a while. Seems to work quite well.

(when used for lighting rather than backdrops, alignment is not important but accurate energy conservation and spectral consistency are)

Pear Lighting Anatomy

Testing the limits of conventional shading models and photometric materials.

Bartlett Pear by inciprocal on Sketchfab